Misinformation Policies for Two Social Giants

As social media has become an integral part of our lives, efforts to fill it with misinformation have increased as well, whether from companies trying to sell you their products over their competitors' or from government-backed organizations trying to influence elections and laws. While it is ultimately our responsibility to vet and validate the information we take in, the platforms that distribute it also have a responsibility to ensure that what they host is accurate and trustworthy.

The rules and policies a platform adopts on misinformation set the baseline for what information can be posted and spread there. These policies are, by their nature, never perfect, but they should be clear and effective enough to identify and remove any information that is deliberately meant to cause harm. The two platforms whose misinformation policies I would like to analyze are YouTube and Instagram.

YouTube’s policies, outlined here, target three categories of content that it treats as misinformation: “suppression of census participation, manipulated content, and misattributed content.” YouTube does make exceptions to these rules for “educational, documentary, scientific, or artistic content.” This exception allows creators to discuss misinformation and educate others about it without fear of being banned, so long as the content also provides additional context about the misinformation. While YouTube does not outline how it uses technology to identify misinformation, it does provide instructions on how anyone can report a video as misinformation for review. One helpful note YouTube also provides is that its policies apply to the external links creators include in their content.

Instagram’s policies, located here and here, are much more thorough and outline the specific types of content it considers misinformation. It also groups this content into three categories: “Physical Harm or Violence, Harmful Health Misinformation, and Voter or Census Interference.” Instagram states that it relies heavily on its community, as well as on third-party fact-checkers certified by the non-partisan International Fact-Checking Network, to identify misinformation and to attach “community notes” to posts containing information that has been proven false, so that other viewers can see what is really going on.

A few examples of how these policies are implemented are shown below.

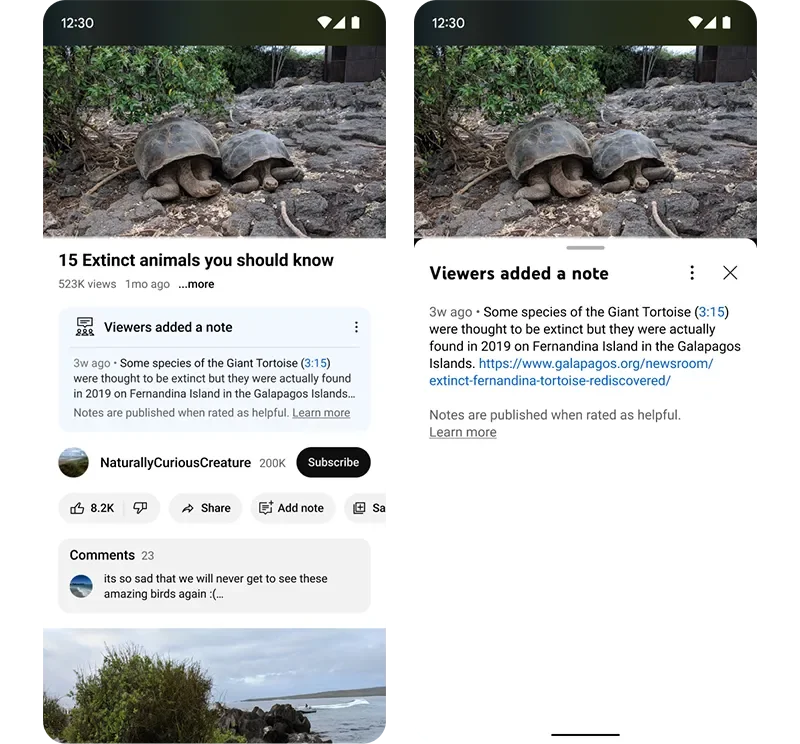

YouTube’s Notes

I could not find any real-world examples of notes on YouTube, either because its tests have concluded or because I was simply unlikely to come across videos carrying them. YouTube did recently test community notes, however, as shown here on its own blog. Beyond community notes, as described in its policies, YouTube will either remove videos containing false information or, if a channel is a repeat offender, remove the channel and all of its videos. I highlighted the external link clause earlier because its enforcement has been a known issue for some creators. When a creator links to an external resource and the content behind that link later changes, sometimes years later and without the creator knowing, so that it violates the rules, YouTube may flag the link and use it as the basis for a ban or channel deletion, even though the link was not a problem when it was posted.

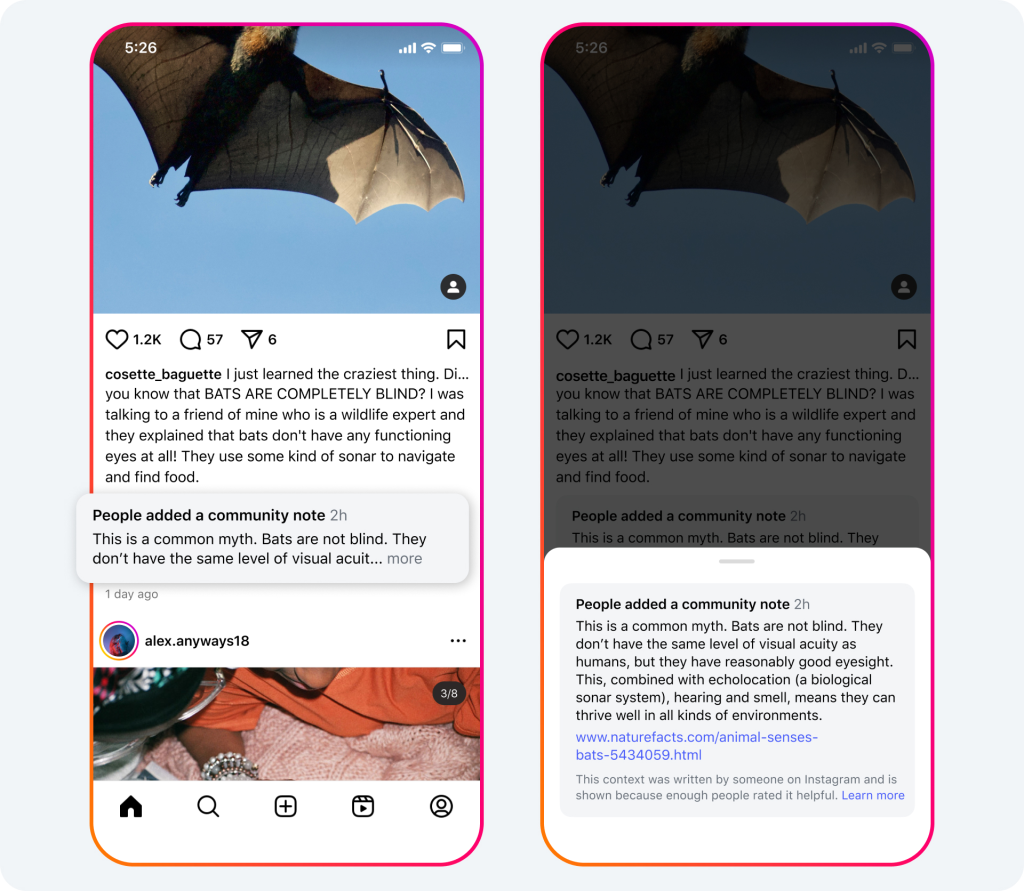

Instagram’s Community Notes

Since Meta, Instagram’s parent company, has made such a large shift to community notes across all of its platforms, its community notes program has become robust and well developed. Meta’s article on the rollout of community notes, found here, shows how posts are identified, how notes are written and voted on, and how notes are displayed on posts once the community approves them. One of the biggest drawbacks of this implementation, however, is the delay between when content is posted and when a community note is published. Having recently joined the community note rating team, I have personally seen some notes take up to three days to be published on a post.

While both platforms may have good intentions and more robust systems than others, such as X (formerly Twitter), they are not perfect. The two issues I have already discussed, YouTube’s external link enforcement and Instagram’s delayed publishing, can undermine these systems and lead users to distrust the platform's ability to moderate its content. One other issue specific to Instagram is its reliance on its community to vote on community notes. Since it does not use verified fact-checkers as much as it used to, there is a real possibility that harmful information will make its way into the community notes themselves.

The first change that should be implemented to help alleviate these issues is to bring back dedicated human content reporters and fact-checkers on both platforms. With the improvements in artificial intelligence, many companies are switching to automated systems for content identification and fact-checking. While these systems may be faster than humans, they often make more mistakes. Humans who are trained in and dedicated to identification and fact-checking would allow for more thorough and nuanced checks, especially for content that is older or made for educational or entertainment purposes. The second change, meant to speed up the slow process of voting and publishing, is to have two separate flags on posts: one for posts that are quickly identified as questionable and one for known false information. The questionable flag could be raised by anyone and should be displayed immediately, remaining in place until enough people have verified the information and a note can be published. This would let viewers see that a post going viral may be a problem, instead of the post going days without any warning while most viewers never look at it again.
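
To make the two-flag proposal more concrete, here is a minimal sketch of how a post might move between flags. It is only an illustration of the idea, not how either platform works: the `Post` class, the `Flag` names, the `report` and `verify_false` methods, and the threshold of three verifications are all hypothetical choices of mine.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional, Set

# Hypothetical threshold; a real value would need tuning against real data.
VERIFICATIONS_REQUIRED = 3


class Flag(Enum):
    NONE = "none"
    QUESTIONABLE = "questionable"  # displayed as soon as anyone reports the post
    KNOWN_FALSE = "known false"    # displayed once enough reviewers agree


@dataclass
class Post:
    post_id: str
    flag: Flag = Flag.NONE
    note: Optional[str] = None
    verifiers: Set[str] = field(default_factory=set)

    def report(self) -> None:
        """Anyone can raise the questionable flag; it takes effect immediately."""
        if self.flag is Flag.NONE:
            self.flag = Flag.QUESTIONABLE

    def verify_false(self, reviewer_id: str, proposed_note: str) -> None:
        """Record one reviewer's verification; publish the note at the threshold."""
        if self.flag is not Flag.QUESTIONABLE:
            return  # only reported, unresolved posts accept verifications
        self.verifiers.add(reviewer_id)  # a set, so repeat votes count once
        if len(self.verifiers) >= VERIFICATIONS_REQUIRED:
            self.flag = Flag.KNOWN_FALSE
            # Simplification: the note from the final verifier is published.
            self.note = proposed_note
```

In this sketch, a single call to `report()` is enough to show the questionable flag, while the known-false flag and its note only appear after three distinct reviewers have called `verify_false()`. What should happen when reviewers find a reported post to be accurate is left out here and would need its own rule.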